A Lesson in Investigative Research and the Power of Data

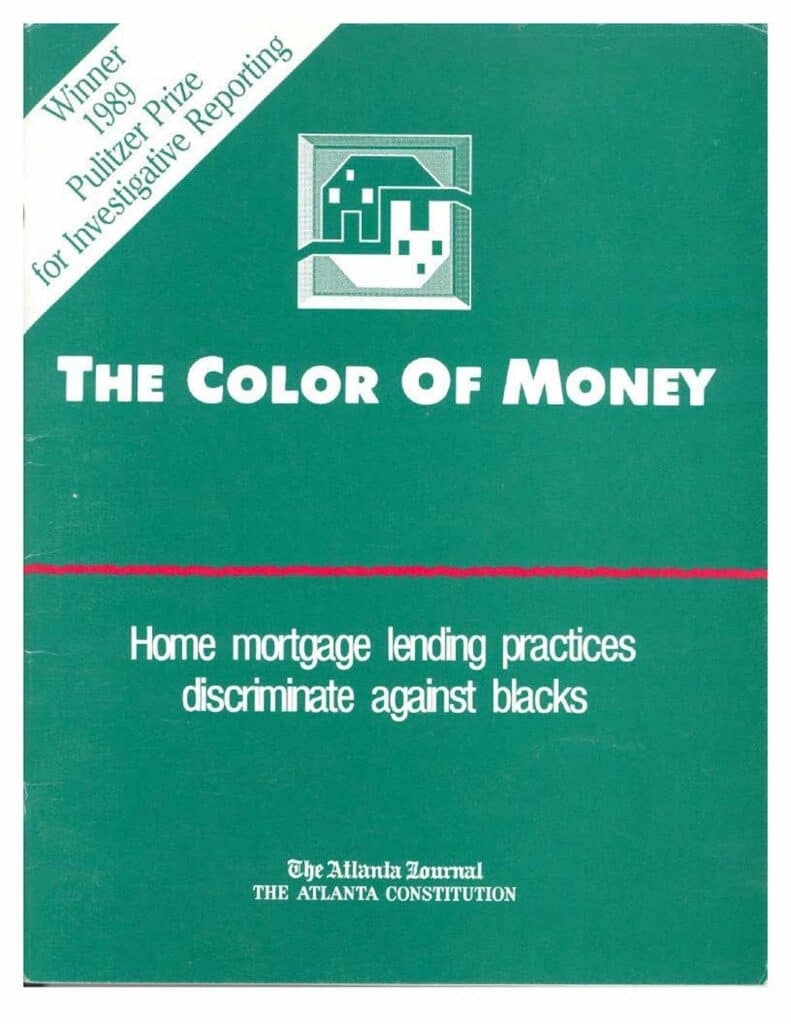

An investigative reporter at the Atlanta Journal-Constitution proved something that no interview, no anecdote, and no amount of firsthand testimony had been able to establish: that Atlanta’s lending institutions were systematically denying mortgage loans to Black borrowers — not because of creditworthiness, but because of race. He proved it with data. That story won the Pulitzer Prize. And it changed how I understood what rigorous research could do.

I built Intellerati on that lesson.

Investigative Research and the Power of Data

Investigative recruiting research, combined with data analytics, has the power to transform executive recruiting. The Executive Search Information Exchange advises Fortune 500 companies on how best to utilize retained executive search firms. Its corporate members have revealed that 40% of traditional retained executive searches fail to complete. Intellerati sees that as an opportunity. Research is the execution engine of executive search. It is how you uncover and ultimately deliver game-changing hires. By harnessing the power of investigative research, we can help fix what is broken about retained executive search. I founded Intellerati, determined to do just that.

My data analytics turning point came when I learned about investigative reporter Bill Dedman’s work in an Atlanta Journal-Constitution investigation titled “The Color of Money.” Dedman’s reporting was so groundbreaking that it received journalism’s highest honor, a Pulitzer Prize. The thing that amazed me then, and still blows my mind today, is that Dedman figured out a way to prove something that had been impossible to prove before.

Lending Redlining and Racial Discrimination

Before that series, reporters could never prove that lending institutions in Atlanta were racially discriminating against prospective borrowers. Lenders would refuse to extend credit to borrowers in an illegal practice known as redlining, as if they had drawn a red line around areas more heavily populated by racial minorities. People living inside those red-lined areas were denied credit or charged exorbitant interest rates.

Before Dedman’s series, all a reporter could do was offer anecdotal evidence: interviews with Black borrowers with excellent credit and high income who had been turned down. Of course, the lenders denied that race played any role. As long as lenders could get away with the illegal practice, it would continue.

Dedman found a different way in. He obtained Home Mortgage Disclosure Act data — federal records that lending institutions were required to file — and analyzed loan approval rates by neighborhood and by race. The data did not just suggest discrimination. It proved it, systematically and irrefutably, across institution after institution. The story ran. The Pulitzer followed. Laws changed.
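For readers who think in code, the kind of question described above (approval rates compared across groups, over every record rather than a handful of interviews) can be sketched in a few lines. This is a minimal illustration only, not Dedman’s actual method or data: the records, the group labels `group_a` and `group_b`, and the function name `approval_rates` are all invented for the example.

```python
from collections import defaultdict

# Hypothetical toy records, invented for illustration only.
# These are NOT Home Mortgage Disclosure Act figures.
# Each record is (neighborhood_group, loan_was_approved).
applications = [
    ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]


def approval_rates(records):
    """Return the share of approved applications for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in records:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


rates = approval_rates(applications)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} approved")
```

The point of the sketch is the shape of the question: every record is counted, so the answer describes the whole data set rather than one person’s experience.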

What This Has to Do with Executive Search

The lesson I took from Dedman was not about mortgage lending. It was about what data can do that no other research method can.

An anecdote tells you what one person experienced. Data tells you what is true across the universe contained in a data set. When you structure the right data and ask it a precise question, it answers with a kind of authority that observation, intuition, and conventional wisdom cannot match or rebut.

I brought that discipline into executive search because the field has the same problem journalism had before computer-assisted reporting: important questions that conventional methods could not answer. Is this the complete universe of viable candidates at this target company? Are we missing anyone? Where, exactly, is the talent we need — and how do we know we have found all of it?

Those are data questions. They require a data methodology to answer.
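One way to picture the “are we missing anyone?” question is as a comparison between two lists: the people a primary source says work at a target company, and the people a conventional search actually surfaced. The sketch below assumes both lists already exist. The names, the `normalize` helper, and the `coverage_gaps` function are hypothetical, and real name matching would need far more care than lowercasing and trimming whitespace.

```python
def normalize(name):
    """Lowercase a name and collapse extra whitespace so simple variants match."""
    return " ".join(name.lower().split())


def coverage_gaps(primary_source_names, sourced_names):
    """Compare a primary-source roster with the names a search surfaced."""
    primary = {normalize(n) for n in primary_source_names}
    sourced = {normalize(n) for n in sourced_names}
    return {
        # On the primary-source roster but never surfaced by the search.
        "missed": sorted(primary - sourced),
        # Surfaced by the search but not confirmed by the primary source.
        "unverified": sorted(sourced - primary),
        # Share of the primary-source roster that the search found.
        "coverage": len(primary & sourced) / len(primary) if primary else 0.0,
    }


# Invented placeholder names, for illustration only.
roster = ["Ada Example", "Ben Sample", "Cy Placeholder", "Dee Demo"]
search_results = ["ada example", "Ben  Sample", "Eve Invented"]

report = coverage_gaps(roster, search_results)
print(report)
```

In this toy case the search found half of the roster, missed two people, and surfaced one profile that the primary source does not confirm. Those are exactly the gaps the questions above are meant to expose.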

From Newsroom to Neural Network: Why Data Discipline in Executive Search Research Matters

Bill Dedman proved something that feels obvious now but was genuinely radical then: data does not just describe the world. Properly assembled and rigorously analyzed, it proves things that no amount of observation, interview, or intuition can establish on its own.

That insight has only become more consequential. Data is now the raw material of artificial intelligence. Every AI recruiting tool on the market — every matching algorithm, every candidate ranking system, every agentic sourcing assistant promising to find the talent you missed — was trained on data. The quality of what those systems surface is determined entirely by the quality, completeness, and structure of the data they were trained on.

This is where the newsroom lesson applies directly. Dedman did not just gather data. He gathered the right data, structured it rigorously, and asked it a question precise enough to produce a provable answer. Sloppy data would have produced a sloppy story — or worse, a wrong one published with confidence.

AI recruiting tools face exactly this problem. LinkedIn’s 1.3 billion member profiles are the primary training and sourcing dataset for most of these systems. Those profiles are self-reported, inconsistently structured, unverified, and increasingly optimized by candidates using AI to game recruiter searches. Feed that data into a matching algorithm, and you get a faster version of the same incomplete picture. The LinkedIn mirage becomes more convincing. The missed candidates stay missed.

The first critical step in this form of investigative research methodology is to ask, “Where is the data?” You might think it is in LinkedIn, but often there are other, better sources of information. Consider what kinds of public databases are available that might offer you a shortcut. Many of them are not indexed on the web, yet some of those public records might hold critical information that would hasten your search for candidates. When you harness the discipline of thinking about data (knowing where it might be, going to primary sources, verifying what you find, mapping target companies, and ultimately uncovering viable candidates who would otherwise have gone undiscovered), you are producing the candidate data that AI can actually use. Garbage in, garbage out remains as true today as it was in the earliest days of computing. Only now there is more garbage: AI slop and false profiles generated at scale.

That is why Intellerati exists. Not to replace AI tools, but to supply the research discipline that makes them trustworthy — and to find the candidates that no algorithm, however well-funded, is currently designed to surface.

For more information: